Last Tuesday night I sat in my apartment in Toronto and listened to myself read an essay I'd written that morning.

Except I wasn't reading it. I was eating dinner. The voice coming out of my laptop was an AI clone of me, running on a machine under my desk.

No API. No subscription. No one else's servers.

Just my voice, or something close enough that nobody can tell the difference, generated by open-source models on hardware I bought once and will never pay for again.

Three months ago, I almost signed up for ElevenLabs.

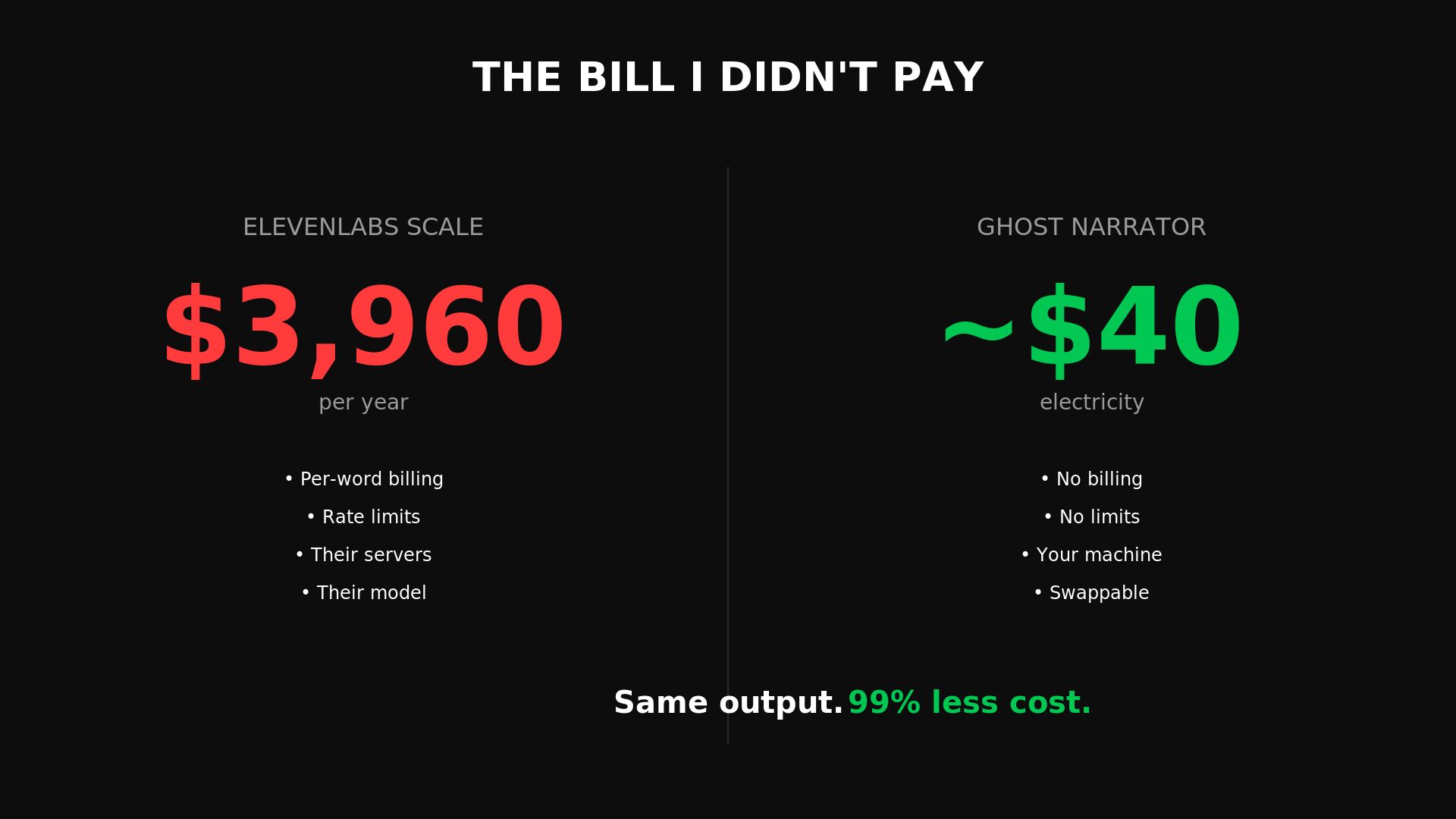

$330 a month. $3,960 a year. Per-word billing. Rate limits. Their servers processing my voice, my content, my words.

I built my own pipeline instead. And what happened next broke every assumption I had about where this industry is going.

The Bill I Almost Paid

We've published more than 200 blog posts on Founder Reality. Every one gets a narrated audio version: you hit play and hear me read it to you.

At that scale, ElevenLabs' Scale plan was the obvious answer. The industry standard. The thing everyone uses. Nobody questions it.

$330 a month. Per-word. Rate-limited. Audio processed on someone else's servers. If you go over your character credits, $0.18 per thousand characters on top.

ElevenLabs is not a small operation. $330 million in annual recurring revenue. $11 billion valuation as of February 2026. 41% of Fortune 500 companies use them. Sequoia led their last round. a16z quadrupled their position.

They're genuinely good at what they do. Best voice library in the industry — 5,000+ voices, 70+ languages, enterprise SLAs that matter for compliance.

I almost signed up. Then I tried something else.

Ghost Narrator

We ran open-source models on a laptop.

A local LLM to rewrite blog posts into natural narration scripts.

An open-source voice cloner to generate audio using a clone of my voice.

The whole pipeline running locally.

No API calls. No per-word billing. No rate limits. Nothing leaving my machine.
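
To make the shape concrete, here is a minimal Python sketch of that two-stage flow. It is an illustration, not Ghost Narrator's actual code: `NarrationPipeline`, `stub_script_writer`, and `stub_synthesizer` are hypothetical names, and both stages are stubs standing in for a local LLM and a local voice cloner.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stage signatures, for illustration only.
ScriptWriter = Callable[[str], str]   # blog post text -> narration script
Synthesizer = Callable[[str], bytes]  # narration script -> audio bytes


@dataclass
class NarrationPipeline:
    """Chains a script-writing stage and an audio stage, both run locally."""
    write_script: ScriptWriter
    synthesize: Synthesizer

    def narrate(self, post_text: str) -> bytes:
        script = self.write_script(post_text)
        return self.synthesize(script)


def stub_script_writer(post_text: str) -> str:
    # Stand-in for a local LLM call: it only collapses whitespace.
    return " ".join(post_text.split())


def stub_synthesizer(script: str) -> bytes:
    # Stand-in for a local voice-cloning model: returns placeholder bytes.
    return script.encode("utf-8")


pipeline = NarrationPipeline(stub_script_writer, stub_synthesizer)
audio = pipeline.narrate("Hello,\n\n  world.")
```

In a real setup each stub would wrap whatever local model you run. The point is only that the pipeline never needs to know which one it is.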

It sounded fine. Not perfect. Fine. Good enough that nobody listening would think twice.

So we built it into a system, open-sourced it, and called it Ghost Narrator. It's been running our narration pipeline for months. The blog post about it went up in March.

Then we hit a problem.

The Licensing Trap

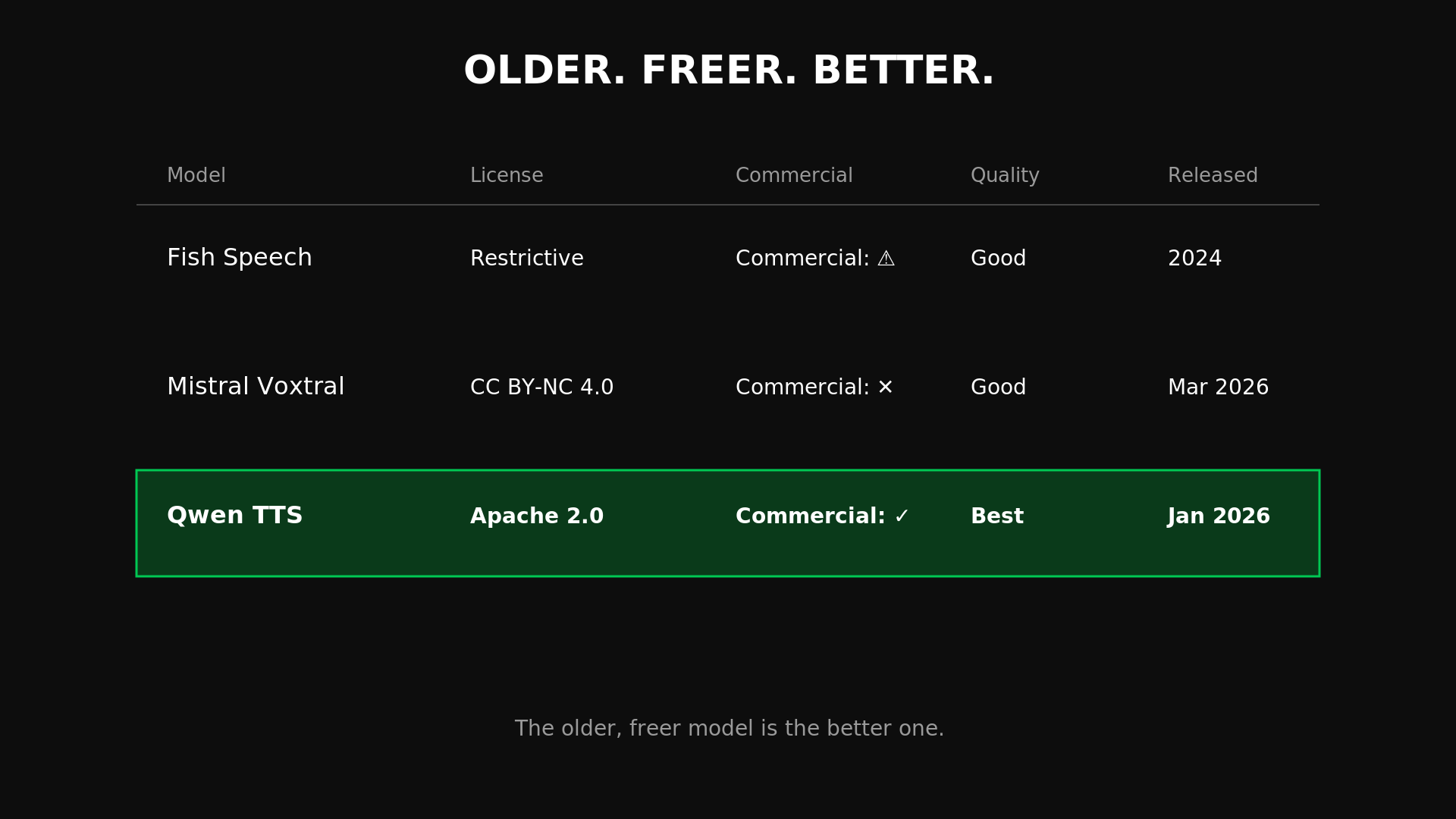

The voice cloning model we originally used — Fish Speech — had a restrictive license. We were publishing 200 narrated posts a month on a model we couldn't fully commercialize.

So I tested alternatives.

The obvious move was Mistral.

They'd just released Voxtral TTS — a 4-billion-parameter model, press everywhere, backed by one of Europe's best-funded AI labs. New. Shiny. Getting attention.

I tried it. The license? CC BY-NC 4.0. Non-commercial.

Let me be specific about what that means.

If you download the model weights and use them to build anything that generates revenue, you need a separate commercial agreement with Mistral.

The "open-source" framing is marketing. You're still renting.

Then I tested Qwen TTS.

Apache 2.0. Fully free. No restrictions on commercial use, modification, or redistribution.

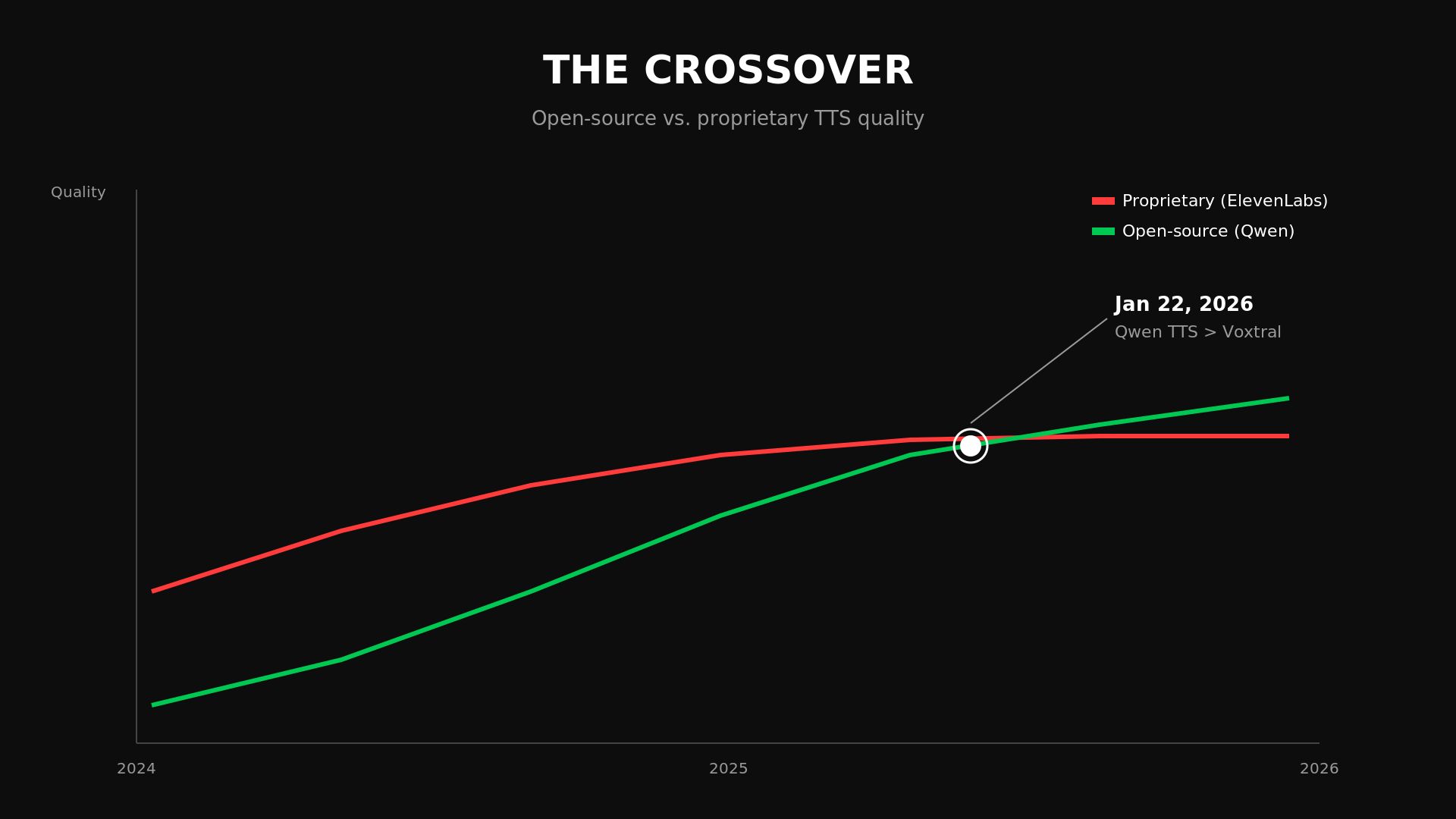

Released January 22, 2026 — two full months before Mistral's model.

And here's where I sat there genuinely shocked.

The voice quality is significantly better. Not marginally. Not "if you listen carefully." Significantly better.

An older model. From Alibaba's Qwen team. More permissive license.

Runs on a $300 GPU. Sounding better than the newer, more restricted alternative from a lab that raised hundreds of millions of dollars.

Older. Freer. Better.

I swapped it into Ghost Narrator in an afternoon.

Same pipeline. Same workflow. Same hardware. The only thing that changed was the model producing the audio.

The quality went up. The cost stayed at zero.

(Here's an audio example of my voice narrated with Qwen TTS.)

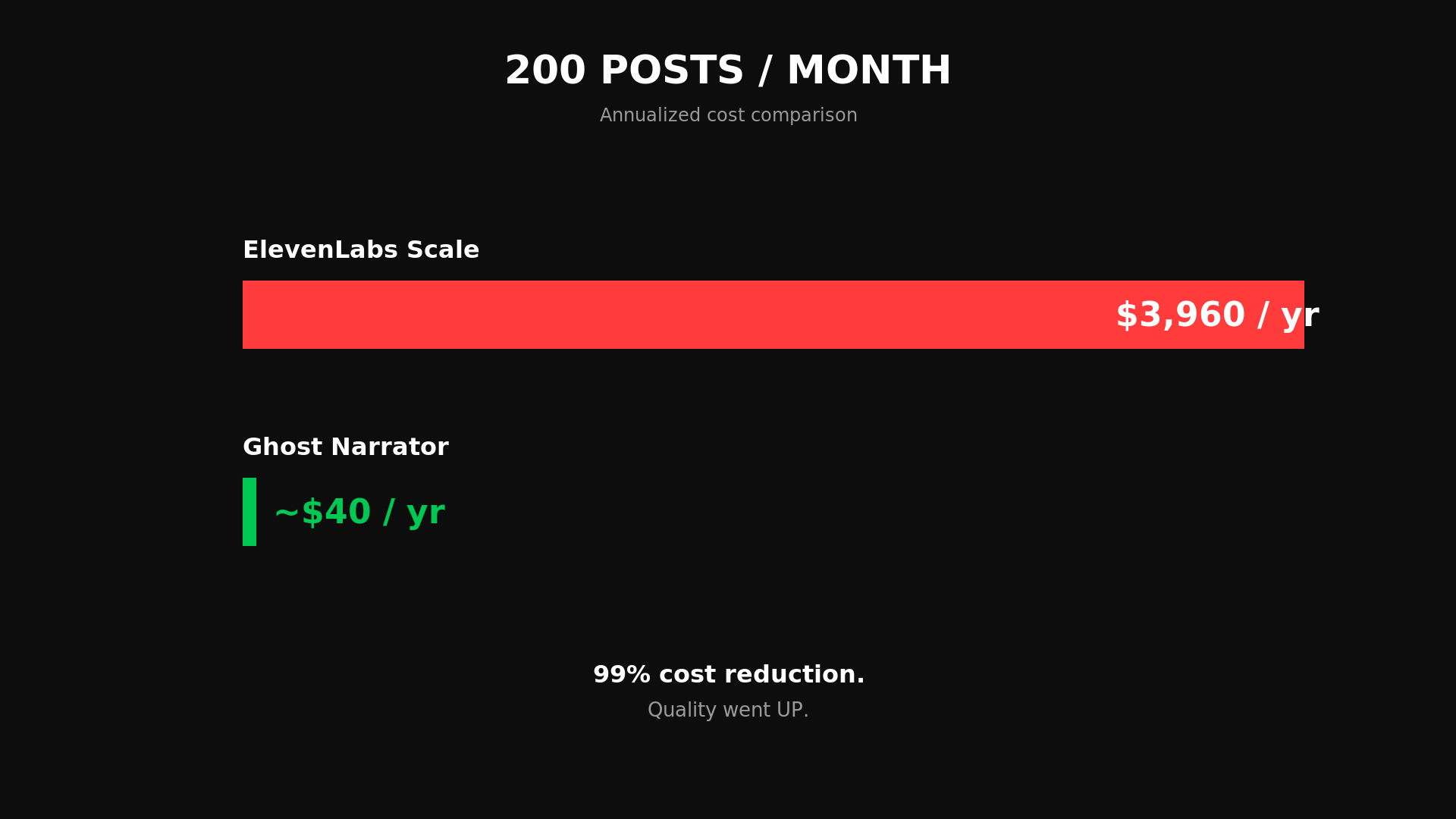

The Numbers

Let me put this side by side.

ElevenLabs Scale plan: $3,960 a year.

Per-word billing. Rate limits. Their servers. Their infrastructure.

If they change their pricing, you pay more.

If they change their model, you get what they give you.

If they go down, your pipeline goes down.

Ghost Narrator with Qwen TTS: electricity. One-time hardware purchase.

No billing. No rate limits. Nothing leaves your machine.

If a better model comes out tomorrow, you swap it in. The pipeline doesn't change. The quality just goes up.

That's the comparison for our scale — 200 posts a month.
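
If you want to run the same comparison for your own setup, a rough break-even calculation looks like the sketch below. The $330 monthly price and the $300 GPU come from this post. The $10 a month for electricity is a placeholder assumption, not a measured figure, and the sketch assumes the GPU is the only hardware you need to buy.

```python
def months_to_break_even(hardware_cost: float,
                         subscription_per_month: float,
                         electricity_per_month: float) -> float:
    """Months until a one-time hardware purchase beats a monthly subscription."""
    monthly_saving = subscription_per_month - electricity_per_month
    if monthly_saving <= 0:
        raise ValueError("local running costs meet or exceed the subscription")
    return hardware_cost / monthly_saving


# $330/month and the $300 GPU are from the post; $10/month electricity is assumed.
annual_subscription = 330.0 * 12
months = months_to_break_even(hardware_cost=300.0,
                              subscription_per_month=330.0,
                              electricity_per_month=10.0)
print(f"Subscription per year: ${annual_subscription:,.0f}")
print(f"Break-even after about {months:.2f} months")
```

Swap in your own hardware and power numbers. The lower your volume, the cheaper the plan you would otherwise need, and the longer the break-even takes.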

But here's what makes this bigger than my blog.

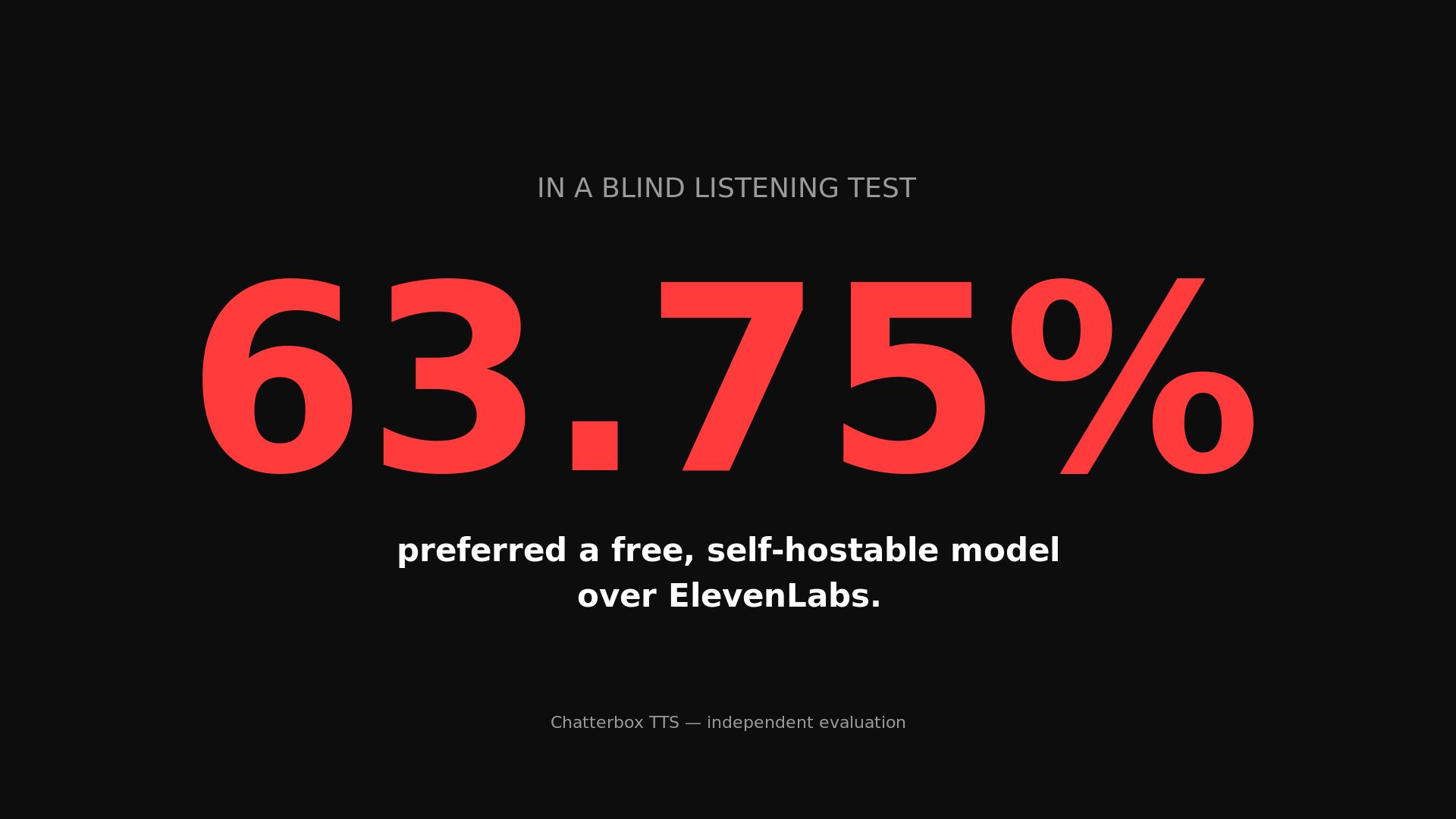

In a blind listening test run by an independent evaluator, 63.75% of listeners preferred a free, self-hostable model called Chatterbox over ElevenLabs.

Let that sit for a second.

The $11 billion company.

The one 41% of the Fortune 500 uses.

The one that raised $500 million in February.

Losing a blind quality test to a model anyone can download and run on a laptop.

The quality moat is gone. What ElevenLabs still has — the 5,000 voices, the 70 languages, the enterprise SLAs, the integrations — those are real.

But they're not what most people are paying for. Most people are paying for voice quality. And voice quality just became free.

The Pattern

This is not a TTS story. This is an everything story.

On the major LLM benchmarks, the quality gap between open-source and proprietary models went from 17.5 percentage points to 0.3 percentage points over a single year.

DeepSeek R1 matched OpenAI's o1 performance at an estimated training cost of $5.6 million versus $100 million, roughly 18x lower.

Full parity across the board is projected by Q2 2026. That's eight weeks from now.

In image generation, it's already here. In text-to-speech, it's already here.

The moat for every AI company charging for API access was always the same thing:

The delay before open-source reaches commercial quality.

That delay used to be two years. Then one year.

In TTS, it went negative — the older, free model is better than the newer, restricted one.

Software stocks have lost over $1 trillion in market cap in 2026. Asana hit all-time lows.

Monday.com lost 70% from its highs.

The share of SaaS companies using per-seat pricing dropped from 21% to 15% in twelve months.

The model is structurally breaking.

The companies most at risk aren't ElevenLabs or OpenAI.

They have real moats — data, ecosystem, enterprise relationships.

The companies at risk are the layer built on top.

The first-wave AI SaaS companies. Content generation tools. Code assistants. Marketing copy platforms.

They resell AI with a thin UX layer, and that layer erodes the moment the model underneath becomes free.

And it keeps becoming free.

The Cautionary Tale

There's a company called Coqui AI that I think about a lot.

Coqui spun out of Mozilla in 2021 to commercialize open-source TTS.

They built genuinely impressive technology.

Their models were being called over 100,000 times a day.

Developers trained over 1,000 custom models daily using their code.

Their XTTS model hit a million downloads in a single month.

They raised $3.3 million total.

They shut down on January 3, 2024.

Massive adoption. Zero monetization.

The open-source nature of the product made charging for it nearly impossible.

The code still lives on — GitHub repos public, community forks continuing. But the company is gone.

Usage and revenue are different numbers. Confusing them destroys companies.

The lesson is brutal: if you're building on open-source AI, you need a moat that isn't the model itself.

Proprietary data. Regulatory lock-in. Network effects. Something.

Because the model is a commodity now, and it gets more commoditized every month.

Why I Built It This Way

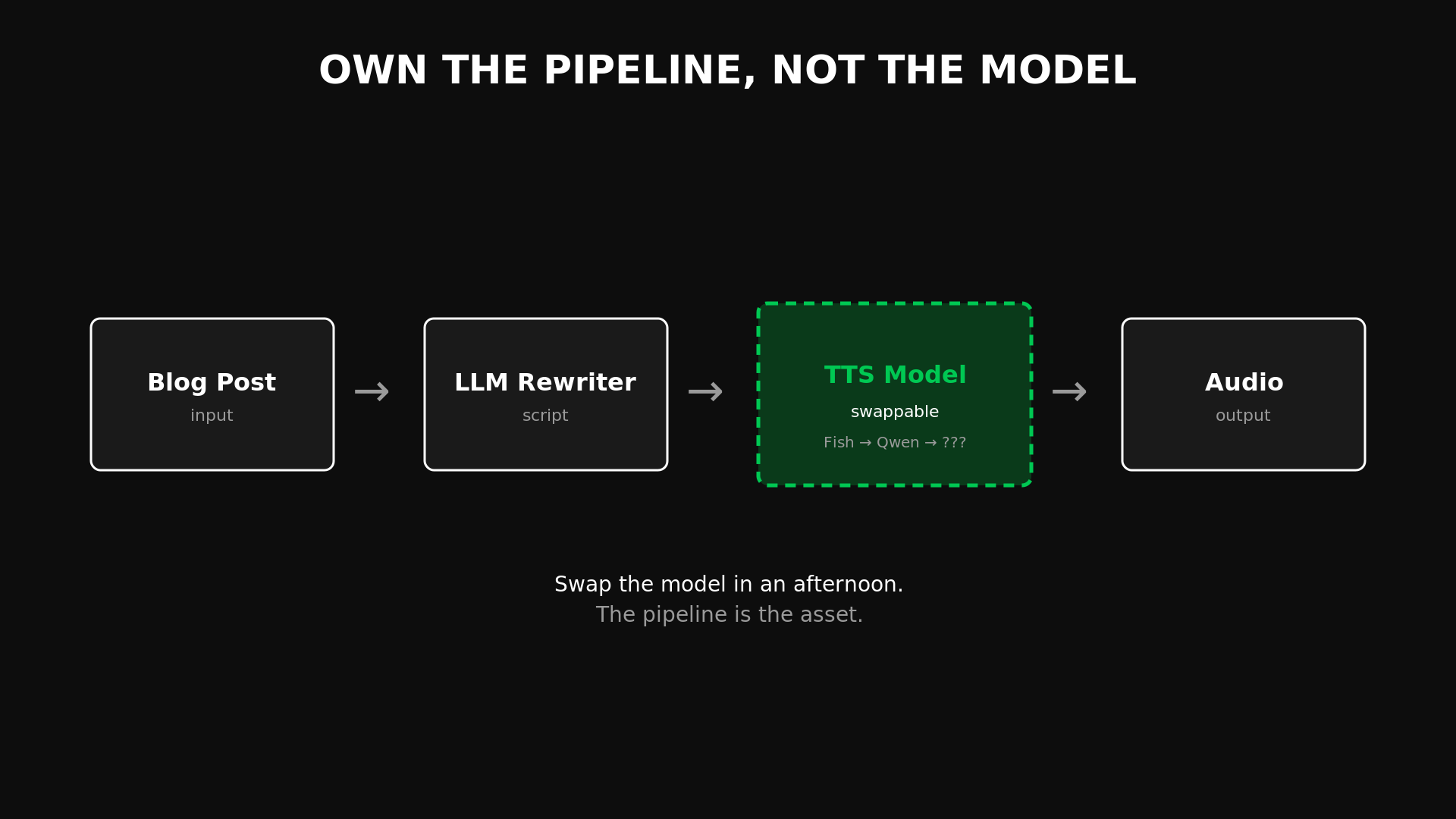

When we designed Ghost Narrator, we made one decision that turned out to matter more than anything: modularity.

The TTS model plugs in and out. We didn't build around Fish Speech.

We didn't build around any specific model.

We built around the assumption that models would keep getting better and we'd want to swap them.

That bet paid off in three months instead of three years.

This is the thing I keep telling founders, and it's the thing most people skip past because it sounds abstract until it saves them money: own the pipeline, not the model.

The model is a commodity.

The pipeline — the architecture that takes an input, processes it through whatever model is best today, and produces an output — that's what you own. That's what compounds.

When Qwen TTS turned out to be better than everything else we'd tested, the swap took an afternoon.

If we'd been locked into ElevenLabs' API — content flowing through their infrastructure, voice profiles stored on their servers, billing tied to their character credits — switching would have been a project.

A migration. A risk.

Instead it was a config change.
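
Here is a minimal sketch of what "a config change" can look like in practice. It is not the actual Ghost Narrator code: the registry, the `tts_backend` config key, and the two stub backends are hypothetical, and the stubs return tagged placeholder bytes instead of calling Fish Speech or Qwen TTS.

```python
from typing import Callable, Dict

# Hypothetical backend signature: narration script in, audio bytes out.
TTSBackend = Callable[[str], bytes]

BACKENDS: Dict[str, TTSBackend] = {}


def register(name: str) -> Callable[[TTSBackend], TTSBackend]:
    """Decorator that adds a backend to the registry under the given name."""
    def decorator(fn: TTSBackend) -> TTSBackend:
        BACKENDS[name] = fn
        return fn
    return decorator


@register("fish-speech")
def fish_speech_stub(script: str) -> bytes:
    # Placeholder only: a real backend would run the model locally.
    return b"fish:" + script.encode("utf-8")


@register("qwen-tts")
def qwen_tts_stub(script: str) -> bytes:
    # Placeholder only: a real backend would run the model locally.
    return b"qwen:" + script.encode("utf-8")


def load_backend(config: dict) -> TTSBackend:
    """Pick the backend named in the config; the rest of the pipeline is unchanged."""
    name = config["tts_backend"]
    if name not in BACKENDS:
        raise KeyError(f"unknown TTS backend: {name!r}")
    return BACKENDS[name]


# Swapping models means changing this one value.
config = {"tts_backend": "qwen-tts"}
synthesize = load_backend(config)
audio = synthesize("hello")
```

Everything upstream and downstream calls `synthesize` and never imports a model directly, which is why the swap stays small.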

What I'm Actually Telling You

I'm not telling you to cancel ElevenLabs. If you publish 10 blog posts a month, their Starter plan at $5 is probably the right answer. The convenience is worth it.

I'm telling you that the math changes fast.

At our scale, we'd be paying $3,960 a year for something we now run for the cost of electricity. Not with a worse model. With a better one.

And I'm telling you this pattern — paid API today, free and better tomorrow — is happening across every AI category simultaneously.

The TTS market is $4.25 billion in 2025, projected to hit $8 billion by 2030.

The voice cloning market alone is $2.4 billion. Real markets. Real money flowing.

And the open-source floor under all of them is rising faster than the commercial ceiling.

A year ago, the question was: which AI API should I pay for?

The question now is: how long until the free version is better?

In TTS, the answer is: it already is. You just don't know it yet.

We're going to keep documenting every swap.

Every time a paid tool gets replaced by something we run locally, we'll write about it here and open-source the replacement.

Not because we're against commercial software.

Because the math is changing faster than people realize, and I'd rather show the receipts than argue about it.

Ghost Narrator is open-source: github.com/getsimpledirect/workos-mvp

Listen: Every article on Founder Reality is narrated by Ghost Narrator.

Hit play on any post. That's what it sounds like.

P.S. We're swapping models, so the first few articles may not have audio yet. Go a bit further back and let me know what you think of the quality!